Introduction & Overview of the Ethical Implications of Artificial Intelligence

Artificial intelligence (AI) is the field of computer science that focuses on developing intelligent machines and systems. AI involves using algorithms, data, and other techniques to enable computers and other devices to perform tasks that typically require human intelligence, such as recognizing patterns, making decisions, and learning from experience.

AI could transform many areas of our lives, from healthcare and education to transportation and finance. By automating routine tasks and offering solutions to complex problems, it can improve efficiency, productivity, and quality of life. However, the development and use of AI also raise significant ethical and social concerns, such as job displacement, bias, and privacy violations. As AI continues to advance, researchers, policymakers, and society at large will need to address these challenges and ensure that the benefits of AI are shared by all.

Definition of the Ethical Implications of Artificial Intelligence (AI)

The ethical implications of artificial intelligence are the moral and social concerns surrounding the development and use of AI. These concerns can arise at any stage of the AI lifecycle, from designing and training a system to deploying and using it in the real world. One key concern is bias. AI systems trained on biased data can produce biased outcomes and treat certain groups of people unfairly. For example, a facial recognition system trained on a predominantly white dataset may have difficulty accurately recognizing people of color.

Background Information on the Ethical Implications of Artificial Intelligence

As noted above, several ethical concerns arise in developing and deploying AI systems, and bias is among the most prominent. Bias in AI systems is the tendency of AI algorithms and systems to produce unfair or discriminatory outcomes. It can arise from several sources, including the data used to train the system, the design and implementation of its algorithms, and the context in which the system is used.

One study found that AI systems trained on biased data can produce biased outcomes (Ntoutsi et al., 2020). As in the facial recognition example given earlier, a system trained on a predominantly white dataset may have difficulty accurately recognizing people of color, which can lead to unfair treatment of and discrimination against those groups.
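One way to make this kind of disparity visible is to compare a system's accuracy separately for each group. The following is a minimal sketch, not the method used in the cited study: the function name `accuracy_by_group`, the group labels, and the evaluation records are all hypothetical and invented for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for (group, true_label, predicted_label) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        if truth == predicted:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Hypothetical evaluation results, invented purely for illustration.
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 1, 0),
]

per_group = accuracy_by_group(records)
# Difference between the best-served and worst-served group.
gap = max(per_group.values()) - min(per_group.values())

print(per_group)
print(f"accuracy gap: {gap:.2f}")
```

A large gap between groups does not by itself explain where the bias comes from, but it indicates that the training data and the model deserve closer review.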

Another study found that the design and implementation of AI algorithms can also introduce bias (Vaid et al., 2020). For instance, an algorithm designed to predict recidivism may use attributes such as race and gender as input variables, even though these attributes should have no bearing on the prediction. This can perpetuate existing biases and lead to unfair outcomes.
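A simple audit of an algorithm like the recidivism example above is to compare how often it flags members of each group. The sketch below computes each group's positive-prediction rate and the ratio of the lowest rate to the highest, a check often described as a demographic parity or disparate impact ratio. It is an illustration under stated assumptions, not the analysis from the cited study: the helper names, the group labels, and the model outputs are hypothetical.

```python
def positive_rate(predictions):
    """Fraction of cases flagged as high risk (prediction == 1)."""
    return sum(predictions) / len(predictions)


def rate_ratio(predictions_by_group):
    """Return per-group positive rates and the lowest-to-highest rate ratio."""
    rates = {group: positive_rate(preds)
             for group, preds in predictions_by_group.items()}
    highest = max(rates.values())
    # If no group is ever flagged, the rates are trivially equal.
    ratio = min(rates.values()) / highest if highest > 0 else 1.0
    return rates, ratio


# Hypothetical model outputs (1 = flagged high risk), invented for illustration.
predictions_by_group = {
    "group_a": [1, 0, 0, 0, 1, 0, 0, 0],  # 2 of 8 flagged
    "group_b": [1, 1, 0, 1, 0, 1, 0, 0],  # 4 of 8 flagged
}

rates, ratio = rate_ratio(predictions_by_group)
print(rates)
print(f"rate ratio: {ratio:.2f}")
```

A ratio close to 1 means the groups are flagged at similar rates. A ratio well below 1 does not prove the algorithm is unfair, but it signals that its inputs and design should be examined.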

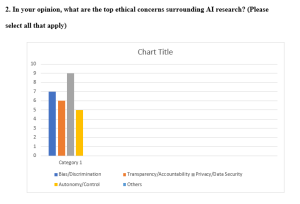

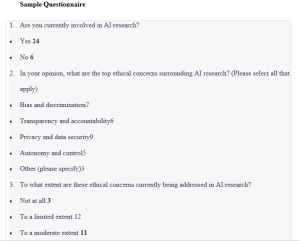

Research Methods/Strategy for the Ethical Implications of Artificial Intelligence

To accomplish the study’s goals, both primary and secondary sources of information were used. Primary data were collected with semi-structured questionnaires completed by the personnel of an IT organization. Questionnaires are most commonly used when respondents can be identified and reached and are willing to participate.

Questionnaires are also fairly economical in effort, time, and money, and they produce data that can be measured and are simpler to analyze. The questionnaire was divided into sections to make it easier for participants to complete and more pertinent to the study’s aims. In total, thirty people agreed to take part in the research.